Citrix MCS on Nutanix AHV: Unleashing the power of clones!

With the release of the plugin for Citrix MCS on Nutanix AHV, I got a lot of questions about the inner workings of AHV cloning. So here goes: a blog post that (hopefully) helps you get a grasp of how Nutanix AHV uses clones in Citrix MCS.

As explained in previous articles, Nutanix solves data locality issues when using vSphere and/or Hyper-V by implementing Shadow Clones:

- How Nutanix helps Citrix MCS with Shadow Clones

- Overcoming the 3 biggest challenges with Citrix MCS

- Citrix MCS for AHV: Under the hood

The question that I got: ‘Will Shadow Clones help with Citrix MCS on Nutanix AHV too?’

The short answer: ‘No’. The longer answer is that Shadow Clones were built to achieve data locality in multi-reader scenarios, such as the linked-clone technologies that MCS and View Composer offer on vSphere and Hyper-V. That is not how MCS works on Nutanix AHV.

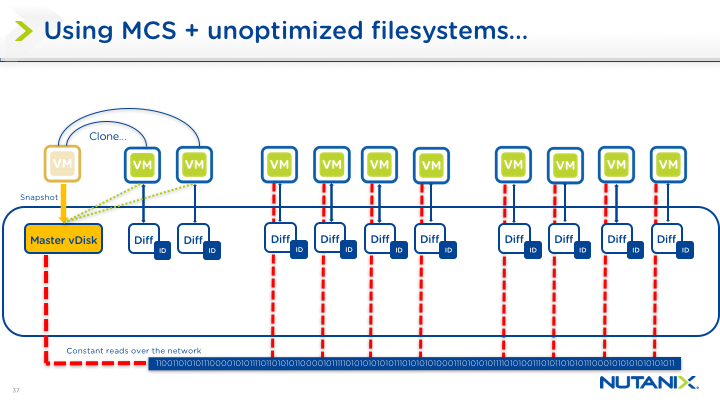

How does this Shadow Clones thing work again? Here’s one of the slides from the deck I presented with Martijn Bosschaart at Citrix Synergy (SYN219):

MCS in legacy infrastructure scenarios:

Key takeaways on this image:

- All reads traverse the network, congesting it.

- This creates a hotspot of reads on your centralised storage.

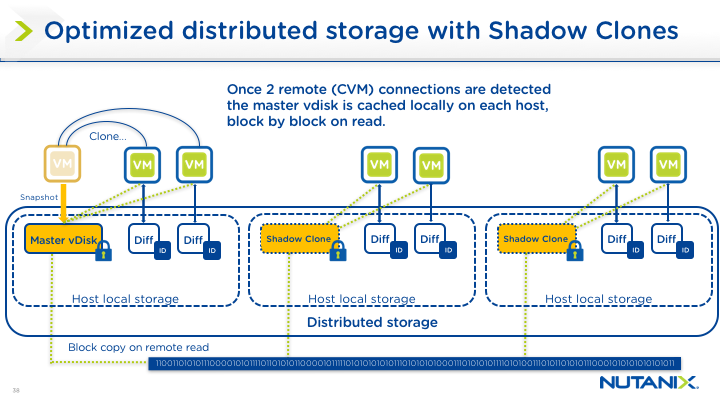

MCS on Nutanix with vSphere or Hyper-V:

Key takeaways on this image:

- All reads and writes stay local, enhancing performance for the user.

- The reads no longer traverse the network, which frees up bandwidth for other workloads.

- This avoids a hotspot of reads on your centralised storage.
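To make the difference between the two slides concrete, here is a toy model in Python. It is only a sketch and not how Nutanix implements Shadow Clones: the function name `count_network_reads`, the node and block counts, and the assumption that the master image lives on a separate node are all made up for illustration. It counts how many base-image reads cross the network when every read goes to central storage, versus when each node keeps a local copy of a block after its first read.

```python
from collections import defaultdict

def count_network_reads(reads, shadow_clones):
    """Count base-image reads that cross the network.

    reads: iterable of (node, block) tuples.
    shadow_clones: when True, a node keeps a local copy of every block
    it has fetched once (a simplification for illustration only).
    """
    cached = defaultdict(set)  # node -> blocks already held locally
    network_reads = 0
    for node, block in reads:
        if shadow_clones and block in cached[node]:
            continue  # served locally, nothing crosses the network
        network_reads += 1
        if shadow_clones:
            cached[node].add(block)
    return network_reads

# Hypothetical workload: 3 nodes, 10 VMs per node,
# every VM reads the same 100 blocks of the master image.
reads = [(node, block)
         for node in range(3)
         for _vm in range(10)
         for block in range(100)]

legacy = count_network_reads(reads, shadow_clones=False)
local = count_network_reads(reads, shadow_clones=True)
print(f"legacy: {legacy} network reads, with local copies: {local}")
```

In this made-up workload the number of network reads no longer scales with the number of VMs per node, only with the number of nodes, which is the point of the second slide.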

I can almost hear you think: “So why won’t Shadow Clones help with AHV?” First of all, it’s because of the cloning mechanism in AHV: cloning is super-fast because it does a VM metadata clone and a disk snapshot. Secondly, AHV uses soft pointers to the master disk, and this master disk can be accessed locally on the node because of our implementation of cache locality/extent locality and metadata.
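The idea of a metadata clone that points back at a snapshotted master disk can be sketched in a few lines of Python. This is a conceptual toy, not AHV's on-disk format or API: the `VDisk` class, its `frozen` flag and the block map are hypothetical names chosen for illustration. It shows why such a clone is fast: no blocks are copied, the clone just holds a pointer to its parent and stores only what it writes itself.

```python
class VDisk:
    """Toy virtual disk: a block map plus an optional pointer to a parent."""

    def __init__(self, parent=None):
        self.parent = parent   # pointer to the snapshotted master, if any
        self.blocks = {}       # only the blocks written to this disk
        self.frozen = False    # a snapshotted disk is treated as read-only

    def clone(self):
        # Snapshot the current disk, then hand out a new, empty disk that
        # points at it. No data is copied, so cost is independent of size.
        self.frozen = True
        return VDisk(parent=self)

    def write(self, block, data):
        if self.frozen:
            raise PermissionError("snapshotted disk is read-only")
        self.blocks[block] = data

    def read(self, block):
        # Follow the pointer chain until some disk holds the block.
        disk = self
        while disk is not None:
            if block in disk.blocks:
                return disk.blocks[block]
            disk = disk.parent
        return None  # block was never written


master = VDisk()
master.write(0, b"golden image")
desktop = master.clone()           # instant: only metadata is created
desktop.write(1, b"user change")   # lands on the clone, not the master
print(desktop.read(0), desktop.read(1), master.read(1))
```

The clone reads the master's data through the pointer, while its own writes never touch the master.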

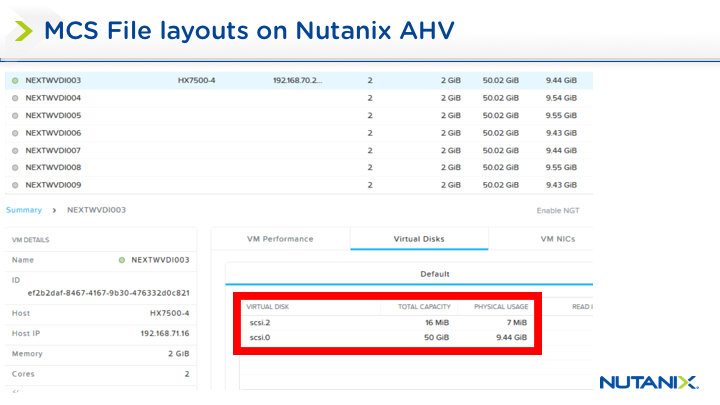

That being said, let’s take a quick look at the disk layout on Nutanix AHV:

MCS-based VM disk layout on AHV:

Key takeaways on this image:

- An MCS-based VM on Nutanix AHV only has two disks: the identity disk and the differencing disk.

- It pre-populates some of the assigned storage.

The secret sauce here is Copy on Read: after the first read hits the master image, this mechanism caches the data local to the VM. While this is very storage efficient due to cache/extent locality, it could potentially consume more storage, but our data reduction technologies will mitigate this. Michael Webster has posted some real-world examples of the savings you can get on his blog:

http://longwhiteclouds.com/2016/05/23/real-world-data-reduction-from-hybrid-ssd-hdd-storage/
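For readers who prefer code over words, Copy on Read as described above can be modelled roughly like this. It is a minimal Python sketch under my own assumptions, not the actual AHV implementation: `CopyOnReadDisk`, the dictionary standing in for the master image and the `master_reads` counter are all hypothetical.

```python
class CopyOnReadDisk:
    """Toy model: the first read of a block copies it next to the VM."""

    def __init__(self, master):
        self.master = master    # stand-in for the master image (block -> data)
        self.local = {}         # blocks already copied local to the VM
        self.master_reads = 0   # how often we had to go to the master image

    def read(self, block):
        if block not in self.local:
            # First read: fetch from the master image and keep a local copy.
            self.master_reads += 1
            self.local[block] = self.master[block]
        # Every later read of this block is served locally.
        return self.local[block]


master_image = {0: b"boot", 1: b"kernel", 2: b"apps"}
disk = CopyOnReadDisk(master_image)
for _ in range(5):
    disk.read(0)
    disk.read(1)
print(disk.master_reads, len(disk.local))
```

The sketch also shows the trade-off mentioned above: each locally cached block takes up space (`len(disk.local)` grows), which is where data reduction comes in.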

Concluding:

This solution provides data locality out of the box when it comes down to the cloning mechanisms used by Citrix MCS on Nutanix AHV. If we combine this with the powers of the storage-native actions that we have available on Nutanix AHV with Acropolis and Prism we get a Citrix XenDesktop-on-Nutanix solution that provides a single, high-density platform for desktop and application delivery. This modular, pod-based approach also enables deployments to scale simply and efficiently with zero downtime.

Kees Baggerman
